Towards Self-Driving Codebases

A GAN-inspired framework for self-healing code

TL;DR: Higharc’s AI Special Projects team is applying concepts from Generative Adversarial Networks (GANs) to software engineering. By pitting two specialized AI agents against each other, we’ve created an autonomous system for “self-healing” code.

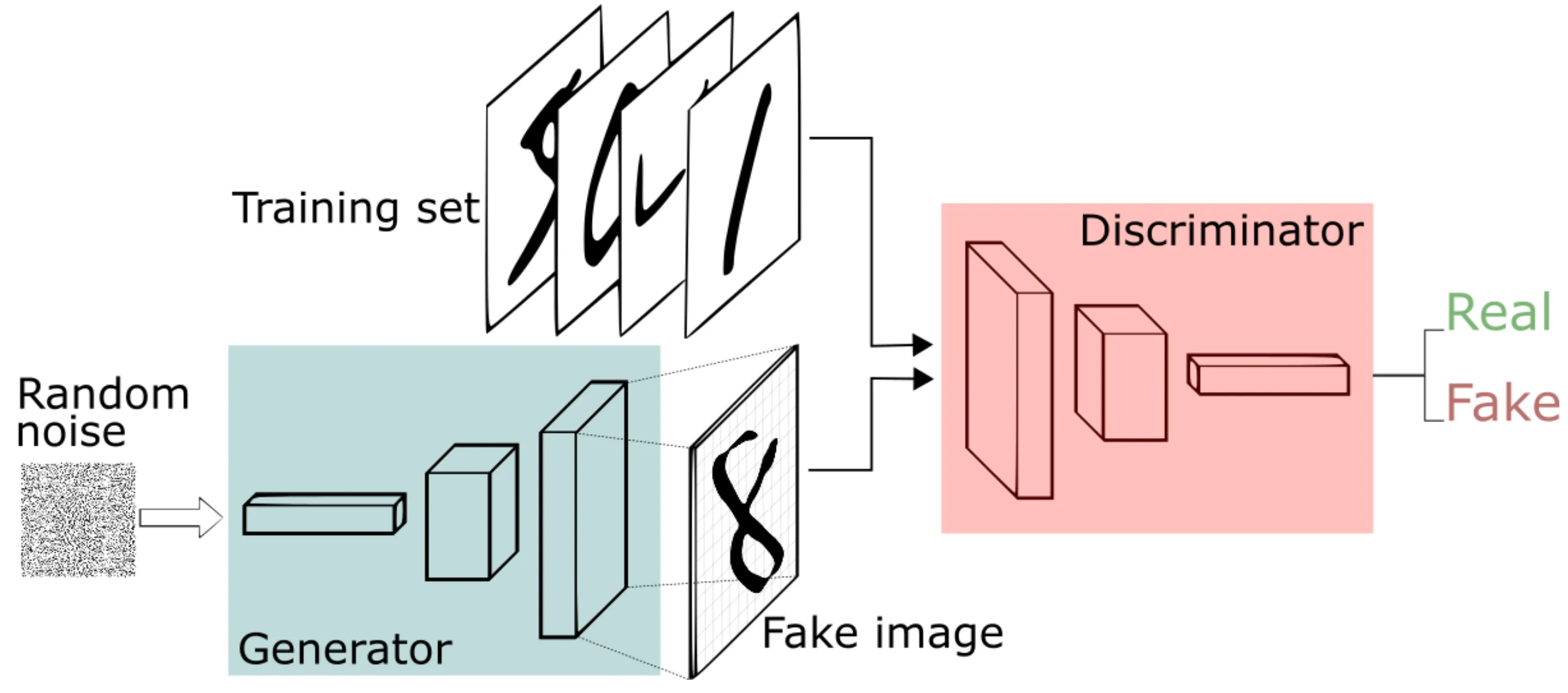

Generative Adversarial Networks

In 2014, Ian Goodfellow and colleagues published a landmark paper introducing Generative Adversarial Networks (GANs). The framework pits two neural networks against one another: a Generator, which learns to model a dataset’s distribution in order to create synthetic data, and a Discriminator, a binary classifier that skeptically judges whether a given image is real or fake.

During training, both adversaries improve together through iterative optimization. Eventually, the Generator learns to produce data so realistic that the Discriminator can no longer distinguish generated images from those drawn from the training set.
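For reference, the original paper frames this contest as a two-player minimax game over a value function $V(D, G)$. Here $D(x)$ is the Discriminator’s estimate of the probability that $x$ is real, and $G(z)$ is the Generator’s output for a noise sample $z$:

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z(z)}\big[\log\big(1 - D(G(z))\big)\big]
$$

The Discriminator pushes $V$ up by scoring real samples near 1 and generated samples near 0, while the Generator pushes $V$ down by making $D(G(z))$ large. At the optimum, where generated and real data follow the same distribution, the best the Discriminator can do is output $D(x) = 1/2$ everywhere.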

Generative Adversarial Networks — Img credit: Thalles Santos Silva

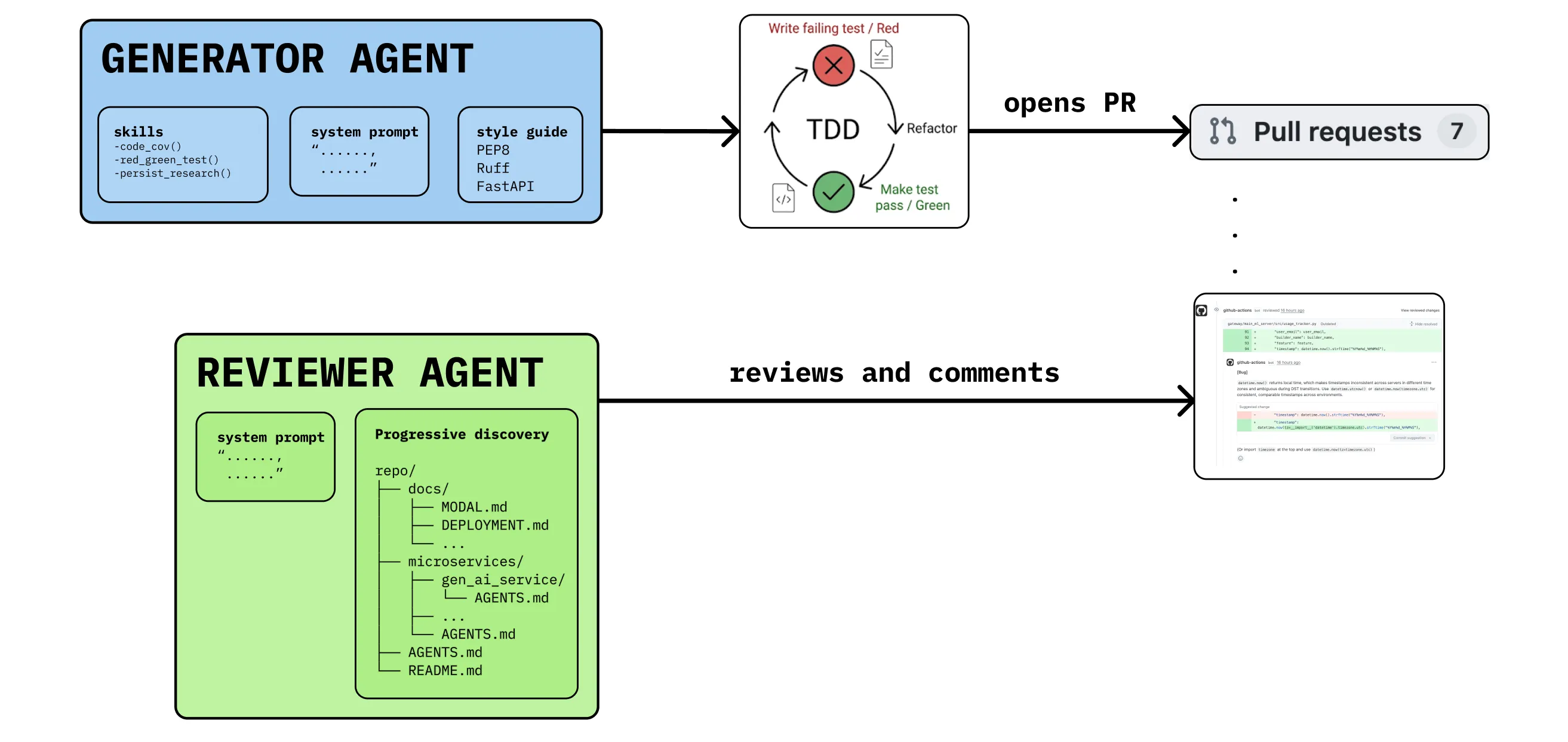

Generator-Reviewer Adversaries

Let’s apply this adversarial logic to Harness Engineering: the practice of building the scaffolding, tests, and observability that autonomous agents need to operate reliably.

Our framework relies on an agent-to-agent system for self-healing code. In this arrangement, the Generator is an autonomous cloud agent that specializes in finding vulnerabilities, under-tested code, and other fragile implementations. It runs on a cron schedule, generating corrective pull requests (PRs).

Ideally, the Generator’s code should be easy to audit, with minimal cognitive load. To that end, the Generator follows a red/green test-driven development (TDD) workflow, writing failing test cases before implementing a passing solution.

The Discriminator, an adversarial cloud review agent (our Reviewer), uses progressive discovery of specs and AGENTS.md files to critically evaluate the Generator’s proposed diffs.

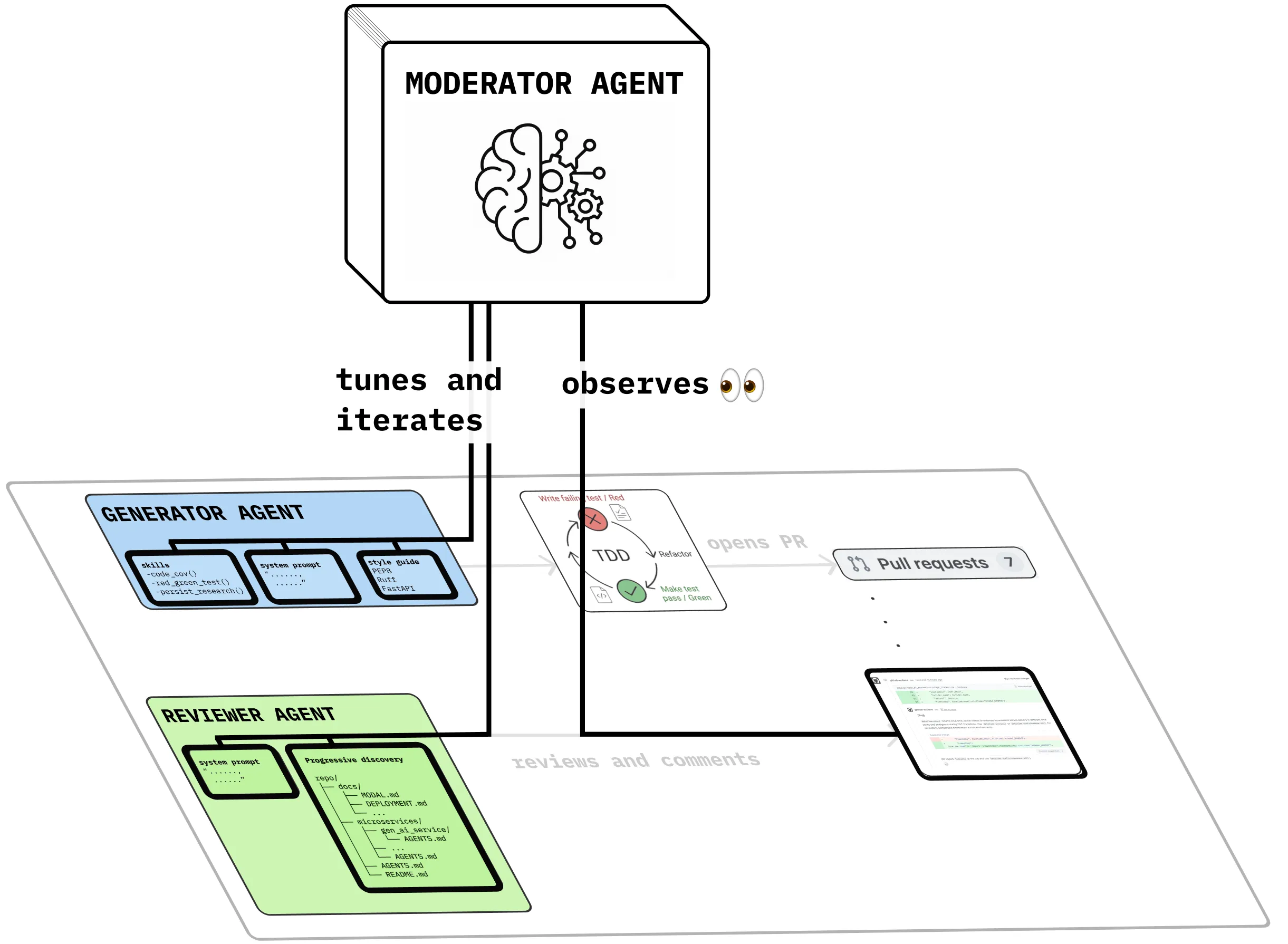

Moderator Agent

As the Generator-Discriminator (GD) pair clashes in blocking reviews, a third agent, the Moderator, acts as a watchful observer. Friction between the two exposes pockets of poor agent legibility (the ease with which an AI agent can parse, understand, and navigate a codebase). The Moderator identifies these gaps and improves the agentic scaffolding as needed.

Human observation and intervention are critical here too: specs, skills, and AGENTS.md files are treated as first-class artifacts. GANs iterate towards “indistinguishability”; our systems aim for PR merge-ability.

The Moderator agent tunes the behaviors of the Generator and Reviewer agents

Does this system work reliably?

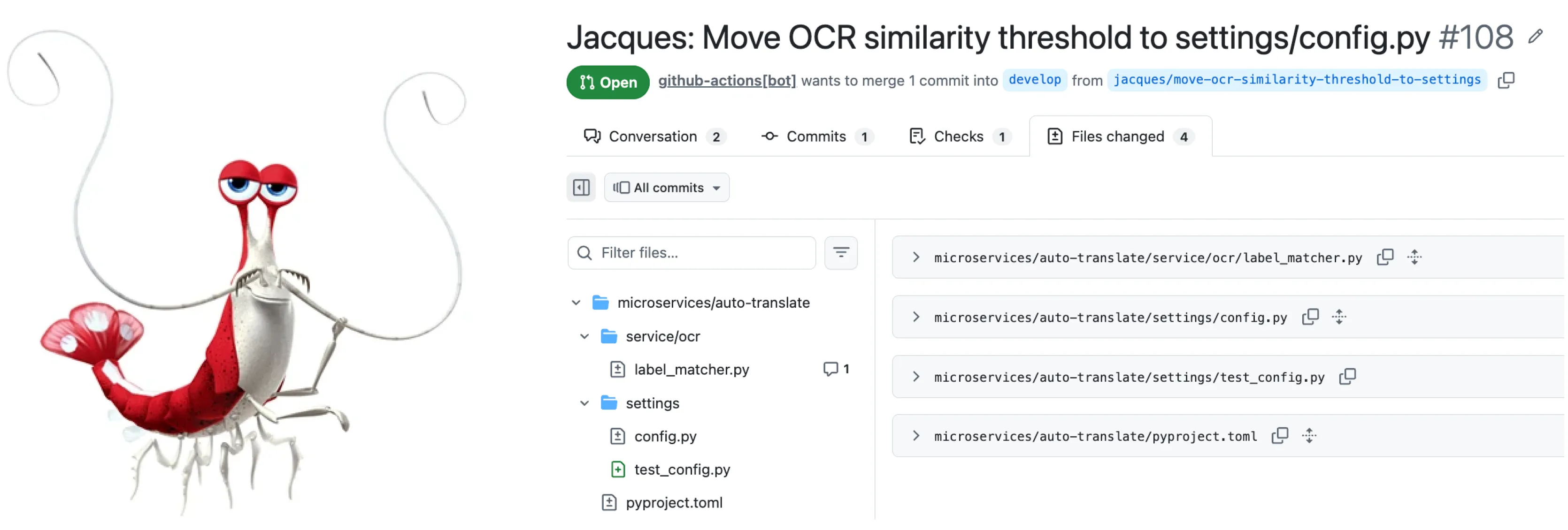

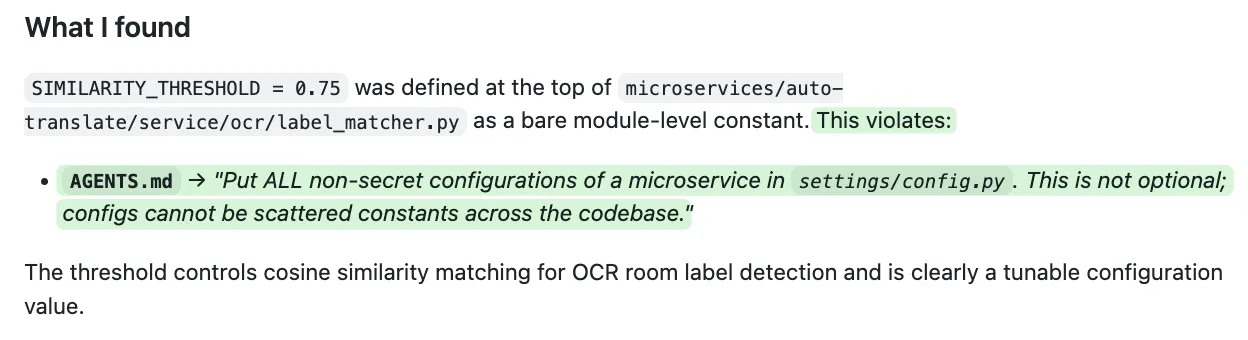

After tuning our harnesses, 75% of the Generator’s pull requests are merged exactly as-is. In this example PR, we can see that our codebase cleaning agent, Jacques-the-shrimp, autonomously:

- Found a magic variable buried in a microservice

- Authored failing tests, in anticipation of the upcoming refactor

- Iterated on the code until all tests passed (and added testing config in .toml)

- Persisted his RED/GREEN testing logs in a filesystem scratchpad

- Presented a concise PR for final review by the Reviewer agent
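To make the red/green loop in the steps above concrete, here is a minimal sketch of what a magic-variable refactor can look like. It is not the code from the PR: the function `retry_delay_seconds`, the constant `MAX_BACKOFF_SECONDS`, and its value of 30 are all hypothetical, chosen only to illustrate the pattern of writing the failing tests first.

```python
# Hypothetical example: the names and the value 30 are illustrative
# assumptions, not code taken from the PR described above.
MAX_BACKOFF_SECONDS = 30  # formerly a bare literal buried in the function body


def retry_delay_seconds(attempt: int, cap: int = MAX_BACKOFF_SECONDS) -> int:
    """Exponential backoff (1, 2, 4, ...) clamped to a named, overridable cap."""
    if attempt < 0:
        raise ValueError("attempt must be non-negative")
    return min(2 ** attempt, cap)


# RED: these two tests are written first. Against the pre-refactor code they
# fail, because there is no named constant and no `cap` parameter.
def test_default_cap_uses_named_constant():
    assert retry_delay_seconds(10) == MAX_BACKOFF_SECONDS


def test_cap_is_overridable():
    assert retry_delay_seconds(10, cap=5) == 5


# GREEN: this test guards against regressions; behaviour below the cap
# must be unchanged by the refactor.
def test_small_attempts_unchanged():
    assert [retry_delay_seconds(n) for n in range(4)] == [1, 2, 4, 8]
```

Keeping the tests this small is deliberate: a reviewer (human or agent) can verify the intent of the change from the test names alone before reading the diff.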

Tech stack

Jacques-the-shrimp runs on ephemeral runners via GitHub Actions, triggered by scheduled cron-jobs. The runtime stack is typical Python tooling (uv, tox, Poetry, coverage), with Claude CLI installed onto the runner. We use Braintrust for observability and traces, and integrate Slack notifications at completion.

GitHub personal access tokens (PATs) let the Generator’s PRs trigger a review from our PR review agent, which follows a pattern of progressive discovery of AGENTS.md files to build a rubric to review against. The gold standard is ensuring both the Generator and Reviewer cite specific lines from your AGENTS.md files. If you transition to open-weights models, this is the exact behavior to encourage through Reinforcement Learning (RL).

Generator agent cites specific clauses in the AGENTS.md
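One plausible reading of “progressive discovery” is sketched below: starting from a changed file, walk up the directory tree and collect every AGENTS.md along the way, nearest first. This is an assumption about the mechanism rather than a description of our review agent’s internals; the function name `discover_agents_files` and the nearest-first ordering are ours for illustration.

```python
from pathlib import Path


def discover_agents_files(changed_file: Path, repo_root: Path) -> list[Path]:
    """Return the AGENTS.md files that apply to `changed_file`, nearest first.

    Assumption: guidance is scoped by directory, so we walk from the file's
    directory up to the repository root and collect each AGENTS.md we pass.
    """
    repo_root = repo_root.resolve()
    current = changed_file.resolve().parent
    if current != repo_root and repo_root not in current.parents:
        raise ValueError(f"{changed_file} is not inside {repo_root}")

    found: list[Path] = []
    while True:
        candidate = current / "AGENTS.md"
        if candidate.is_file():
            found.append(candidate)
        if current == repo_root:
            break
        current = current.parent
    return found
```

Returning the files nearest-first lets a reviewer read the most specific rules before the repo-wide ones, and makes it straightforward to cite the exact file and line a review comment is based on.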

Jacques’ skills

Uncovering under-tested code: Jacques generates test coverage reports and persists them to a local filesystem scratchpad. This allows him to find “dark corners” of untested services.
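As a rough sketch of how a persisted report can be mined for dark corners, the function below ranks files from a coverage.py JSON report (the output of `coverage json`) by how little of them is covered. The function name `find_dark_corners` and both thresholds are assumptions for illustration, not values from our setup.

```python
def find_dark_corners(
    report: dict, threshold: float = 50.0, min_statements: int = 10
) -> list[tuple[str, float]]:
    """Return (path, percent_covered) for poorly covered files, worst first.

    `report` is the parsed JSON written by `coverage json`. Files with fewer
    than `min_statements` statements are skipped so trivial modules do not
    crowd out the results. Both thresholds are illustrative assumptions.
    """
    corners = []
    for path, data in report.get("files", {}).items():
        summary = data["summary"]
        if summary["num_statements"] < min_statements:
            continue
        if summary["percent_covered"] < threshold:
            corners.append((path, summary["percent_covered"]))
    return sorted(corners, key=lambda item: item[1])
```

In practice this would be fed with something like `json.loads(Path("coverage.json").read_text())` after running `coverage json -o coverage.json` in the scratchpad directory.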

Trawling for architectural drift: Jacques identifies where code implementation has diverged from aspirational specs and patterns.

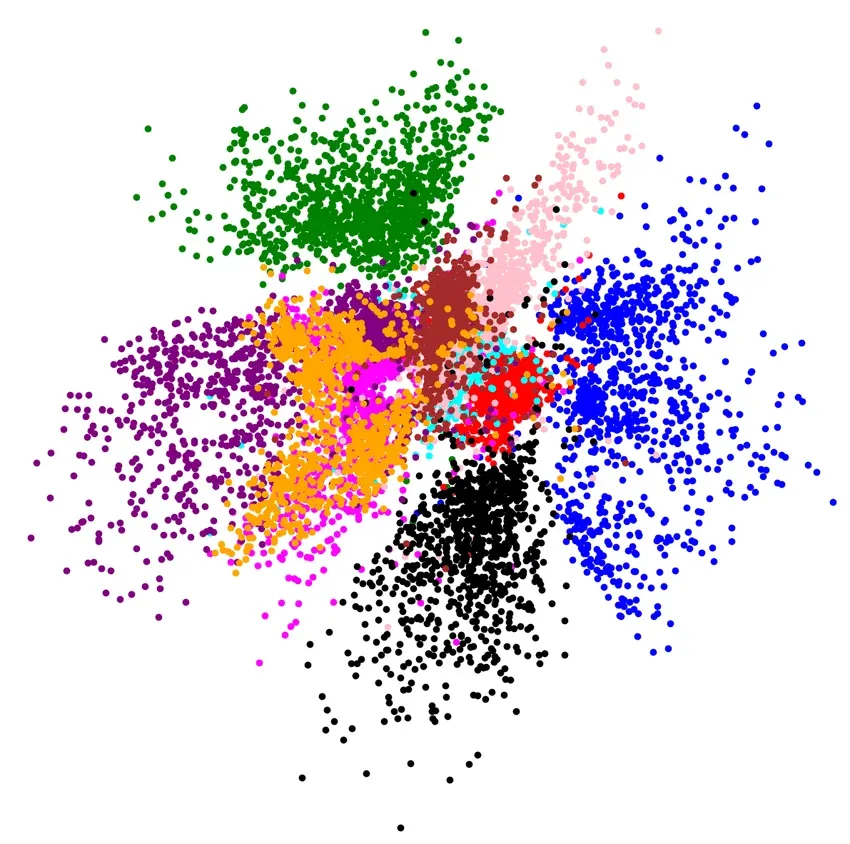

Decomposing long functions: by mapping code logic to more precise pockets of latent space, Jacques improves semantic search results on an indexed codebase (citation). Smaller chunks reduce noise.
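Before a function can be decomposed it has to be found. A minimal sketch of that first step, using Python’s standard `ast` module to flag functions over a line budget, is shown below. The 40-line default and the name `find_long_functions` are assumptions for illustration; the right budget depends on your codebase and chunking strategy.

```python
import ast


def find_long_functions(source: str, max_lines: int = 40) -> list[tuple[str, int]]:
    """Return (function_name, line_count) for functions longer than `max_lines`.

    Length is measured from the `def` line to the last line of the body.
    The 40-line default is an illustrative assumption, not a recommendation.
    """
    tree = ast.parse(source)
    long_functions = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            length = node.end_lineno - node.lineno + 1
            if length > max_lines:
                long_functions.append((node.name, length))
    # Longest first, so the biggest refactoring candidates surface at the top.
    return sorted(long_functions, key=lambda item: item[1], reverse=True)
```

The resulting list is a natural input for the Generator’s next PR: one candidate function per PR keeps each diff small enough to review.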

2D projection of latent space (source)

Lessons learned

Don’t guess: add observability first, before your system even works end-to-end. Use this to understand how your agent greps and globs to get oriented in your codebase. See if you can eliminate some of these initial tool calls with a concise repo map in your system prompt or AGENTS.md.
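A repo map does not need to be elaborate. The sketch below renders the top two levels of a repository as an indented outline that can be pasted into a system prompt or AGENTS.md. The function name `build_repo_map`, the skip list, and the depth of 2 are all assumptions; tune them against what your traces show the agent actually searching for.

```python
from pathlib import Path

# Directories that add noise without helping an agent get oriented.
# This skip list is an illustrative assumption.
SKIP_DIRS = {".git", ".venv", "node_modules", "__pycache__"}


def build_repo_map(root: Path, max_depth: int = 2) -> str:
    """Render files and directories up to `max_depth` levels as an outline."""
    lines: list[str] = []

    def walk(directory: Path, depth: int) -> None:
        # Directories first, then files, each group sorted by name.
        entries = sorted(directory.iterdir(), key=lambda p: (p.is_file(), p.name))
        for entry in entries:
            if entry.name in SKIP_DIRS:
                continue
            indent = "  " * depth
            if entry.is_dir():
                lines.append(f"{indent}{entry.name}/")
                if depth + 1 < max_depth:
                    walk(entry, depth + 1)
            else:
                lines.append(f"{indent}{entry.name}")

    walk(root, 0)
    return "\n".join(lines)
```

Comparing agent traces before and after adding the map is a simple way to check whether it actually removes those initial grep and glob calls.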

Don’t overcomplicate it: while a multi-agent choreography will impress a non-technical audience, what you really need is a simple system that works consistently. Ignore the hype. Make sure your framework is robust and easy to reason about before introducing complexity.

Get to know your model: the agentic legibility of your codebase depends on the model; understanding where your preferred model stumbles, and how much scaffolding it needs, is critical. Remember that the field moves quickly, and your learnings won’t always map to new model releases.

What’s Next

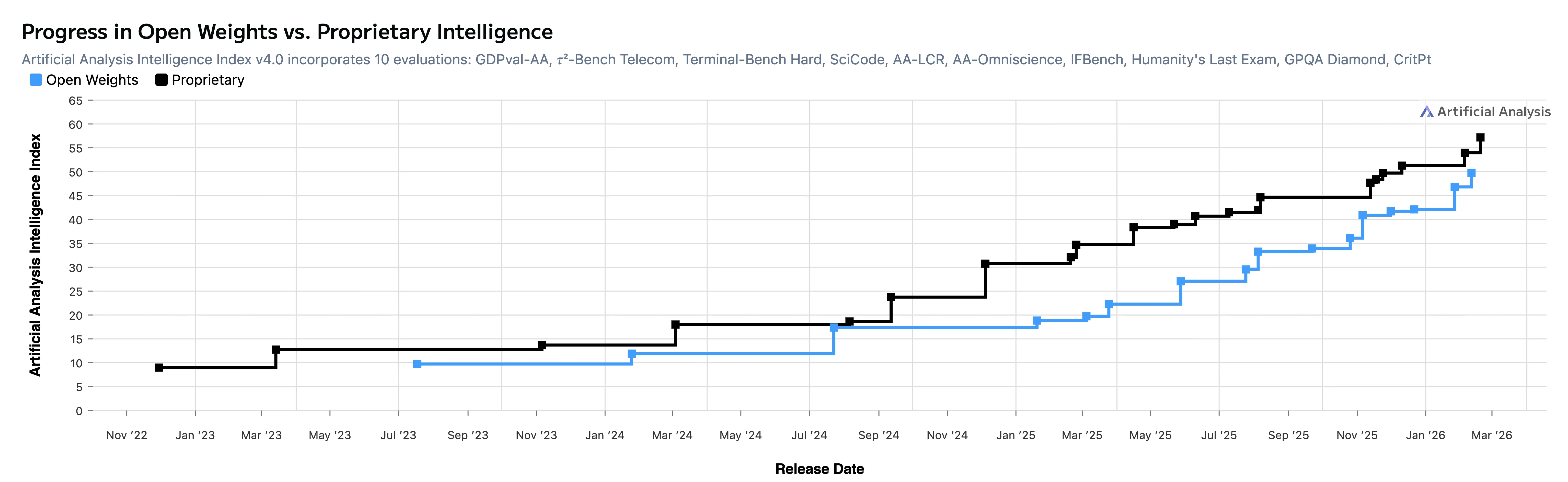

Our GD agents are powered by Anthropic’s Opus 4.6. As open-weights models continue to close the intelligence gap with state-of-the-art closed models, we anticipate further experimentation with fine-tuning and model distillation.

Baking preferred code-review behaviors (like citing AGENTS.md files as inline comments) into model weights would take us from “I hope it abides by the prompt” to inherent alignment. Powering our agentic linting models with fine-tuned weights should prove faster and more deterministic, with cheaper inference.

Join us

At Higharc, the AI Special Projects team is our research arm, focused on delivering bleeding-edge AI in residential construction. We develop products at the intersection of reinforcement learning, computer vision, LLMs, and 3D geometry.

If you’re excited by autonomous agents, self-healing codebases, or applying AI to hard problems in the physical world, we’d love to connect.

See our open roles.